Ed Angel

Professor Emeritus of Computer Science

University of New Mexico

http://www.cs.unm.edu/~angel

[This is a guest post from Ed on a subject near and dear to my heart, online learning. – Eric]

Recently I finished teaching a Coursera MOOC entitled Interactive Computer Graphics with WebGL. Having taken Eric’s excellent three.js course with Udacity, I was interested in doing a very different course. The experience was interesting, at times exasperating, ultimately rewarding and a lot of work. Here are some of my observations, many of which echo some of Eric’s on previous blog posts, and many that relate to the present state of MOOCs.

First, something about me and my course. I’m the coauthor, with Dave Shreiner, of the textbook Interactive Computer Graphics, which is now in its seventh edition. It has been the standard textbook in computer graphics for students in computer science and engineering. For the seventh edition we switched from OpenGL to WebGL, which has turned out to be an excellent decision. We’ve also done both OpenGL and WebGL SIGGRAPH courses, which are now on YouTube at SIGGRAPH U. Given the explosion of interest in WebGL over the past year, I decided to do a MOOC using WebGL. For those of you unfamiliar with WebGL or interested in what I do in my academic course, there’s lots of sample code here that was also available to the students in the MOOC.

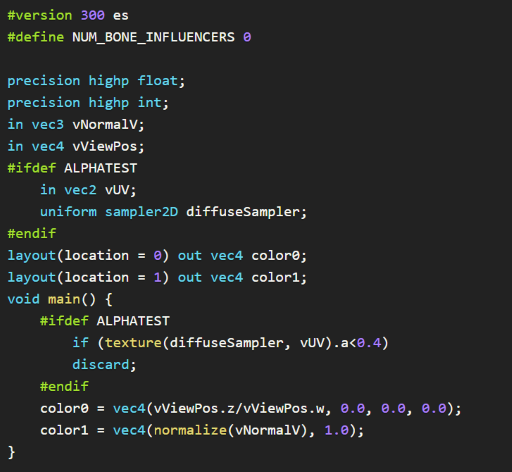

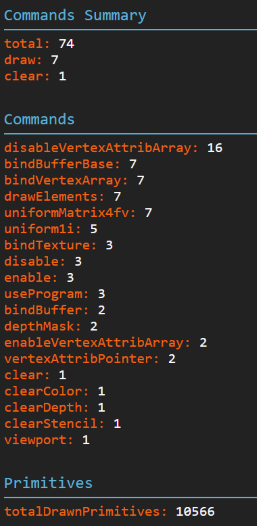

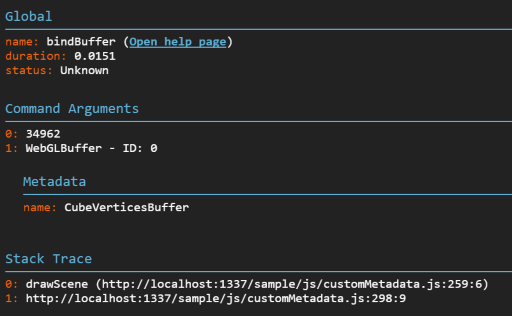

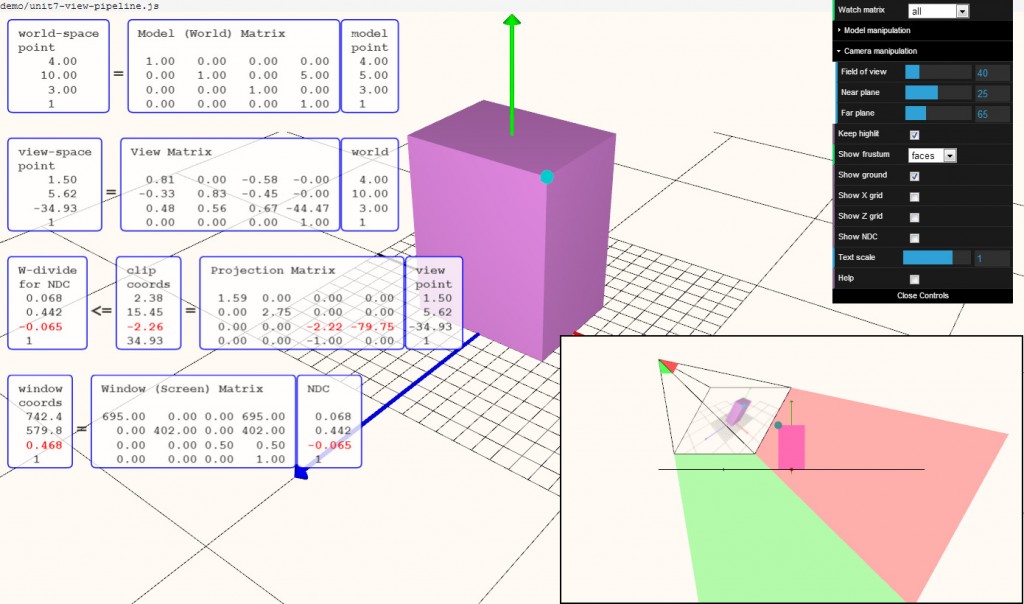

What we teach under the title of Computer Graphics can be very different depending on the audience. For those in the application world, such as the CAD community, who want to use computer graphics at a high level and not worry about writing shaders (or even knowing about shaders), three.js is a powerful tool built on top of WebGL. Users of three.js can reap many of the advantages of WebGL without writing a single line of WebGL code. On the other hand, students in Computer Science and Computer Engineering focus on “what’s beneath the hood”: shaders, algorithms, architectures. The two MOOCs, Eric’s and mine, are completely complementary and pretty much at the same level.

Course Outline

A fundamental premise of my 30+ years of teaching computer graphics is that students should be able to write complete applications as early as possible. While this philosophy is fairly common in university courses, it is very uncommon in programming MOOCs. There are many reasons for this. The two key ones are the time needed to do a complete program and the problem of grading thousands of assignments. Nevertheless, I did not want to teach the course unless I could require complete programs, each one satisfying a set of requirements.

Because WebGL runs in all recent browsers, students needed only to have access to a public website where they could put their assignments. Then they only had to submit the URL to let the graders run the code and see the source. I referred the students who did not have public websites to codepen.io. This mechanism worked wonderfully. The fact that the applications were on public sites never became an issue.

Here are the five assignments, with some student postings folded in:

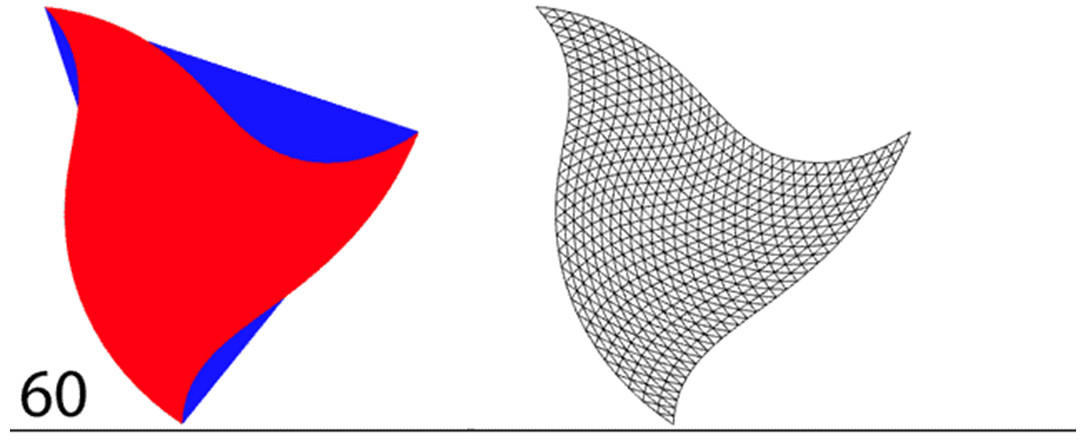

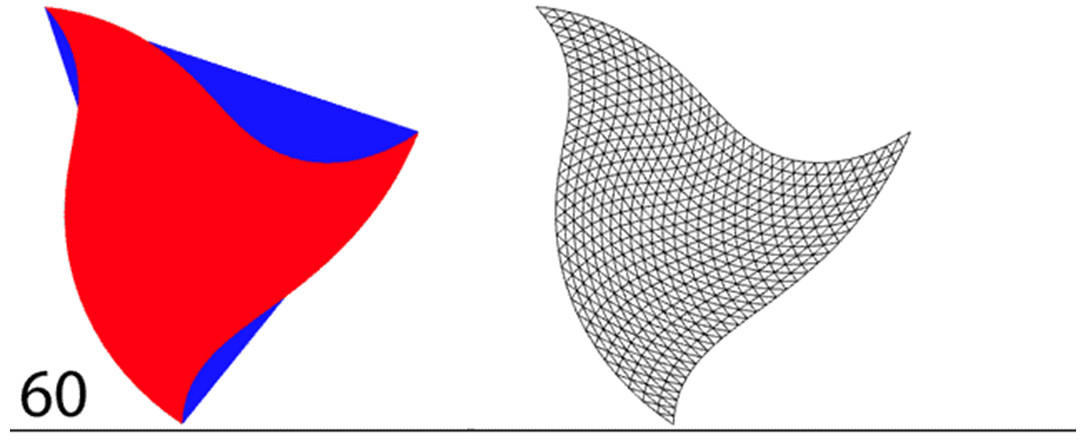

1. Tessellation and Twist: Twist is rotation, where the amount of rotation depends on the distance from the origin. It can best be done in a vertex shader. The assignment starts with a single triangle centered at the origin. Twist applied to its three vertices does not result in a very interesting display. However, if we tessellate the triangle by recursive subdivision, the vertices of the smaller triangles are different distances from the origin, which creates a display in which the filled triangles have a curved outline. I give them some examples so that they need not write a lot of code to do this problem. It not only serves as a test of whether they have sufficient background for the course, it also lets them see what even a simple shader can do.
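To make the twist concrete, here is a minimal sketch of the idea. It is not the course's sample code, and it runs on the CPU in TypeScript purely for illustration, whereas the assignment applies the rotation in a vertex shader. The names `twist`, `tessellate`, and `theta` (radians of rotation per unit of distance), the triangle coordinates, and the subdivision depth are all assumptions made for this example.

```typescript
// A 2D vertex position.
type Vec2 = [number, number];

// Rotate p about the origin by an angle proportional to its distance
// from the origin. `theta` is a hypothetical parameter: radians of
// rotation per unit of distance.
function twist(p: Vec2, theta: number): Vec2 {
  const d = Math.hypot(p[0], p[1]);
  const c = Math.cos(d * theta);
  const s = Math.sin(d * theta);
  return [p[0] * c - p[1] * s, p[0] * s + p[1] * c];
}

function midpoint(a: Vec2, b: Vec2): Vec2 {
  return [(a[0] + b[0]) / 2, (a[1] + b[1]) / 2];
}

// Recursively split a triangle into four smaller triangles by joining
// the midpoints of its edges. Returns a flat list of vertices, three
// per triangle.
function tessellate(a: Vec2, b: Vec2, c: Vec2, depth: number, out: Vec2[] = []): Vec2[] {
  if (depth === 0) {
    out.push(a, b, c);
    return out;
  }
  const ab = midpoint(a, b);
  const bc = midpoint(b, c);
  const ca = midpoint(c, a);
  tessellate(a, ab, ca, depth - 1, out);
  tessellate(ab, b, bc, depth - 1, out);
  tessellate(ca, bc, c, depth - 1, out);
  tessellate(ab, bc, ca, depth - 1, out);
  return out;
}

// An equilateral triangle centered at the origin (assumed coordinates).
const h = Math.sqrt(3) / 2;
const top: Vec2 = [0, 1];
const left: Vec2 = [-h, -0.5];
const right: Vec2 = [h, -0.5];

// Three levels of subdivision give 4^3 = 64 triangles.
const vertices = tessellate(top, left, right, 3);
const twisted = vertices.map((p) => twist(p, Math.PI / 2));
```

Twisting only the three original vertices would rotate each by the same angle, since they are equidistant from the origin, so the triangle would simply turn. After subdivision the interior vertices sit at different distances, rotate by different amounts, and the outline bends.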

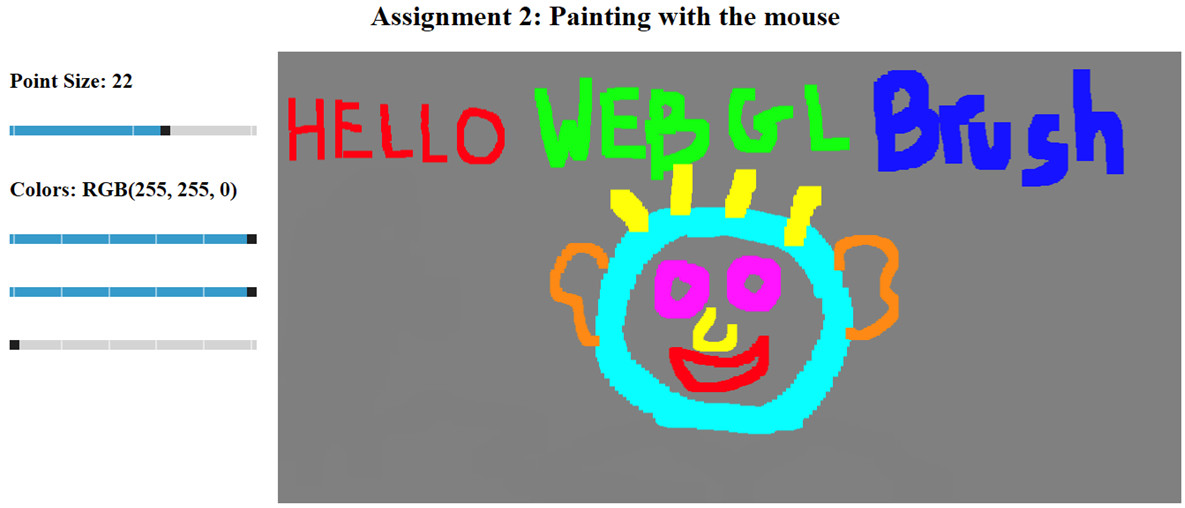

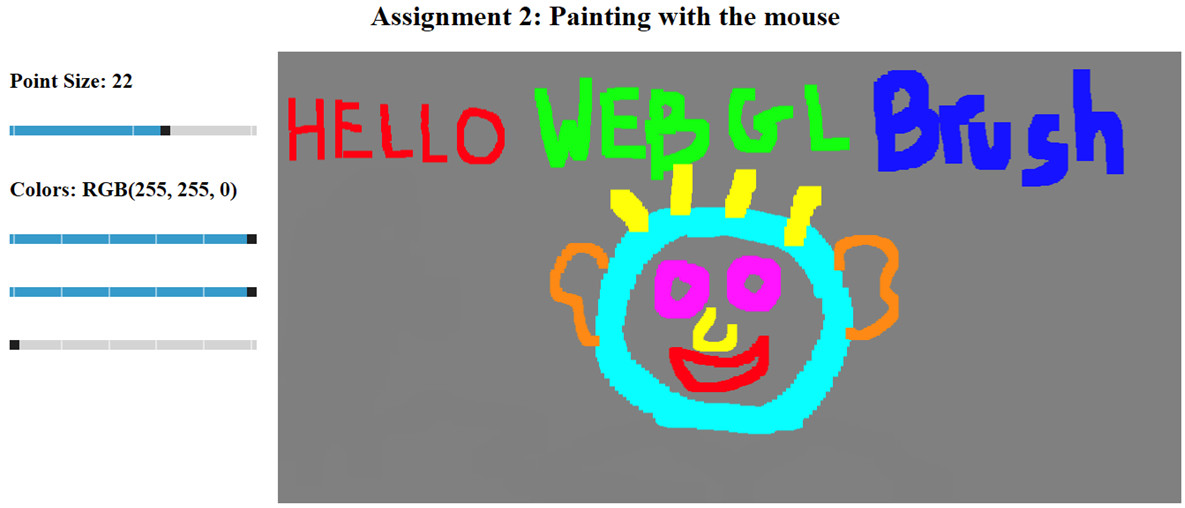

2. Line Drawing: The minimum requirement was to create an application that rendered line segments following mouse clicks. There were many options, such as letting the user change the line thickness via a menu. The main goal was to bring in interactivity through event listeners, which involved both JS and a little HTML5.

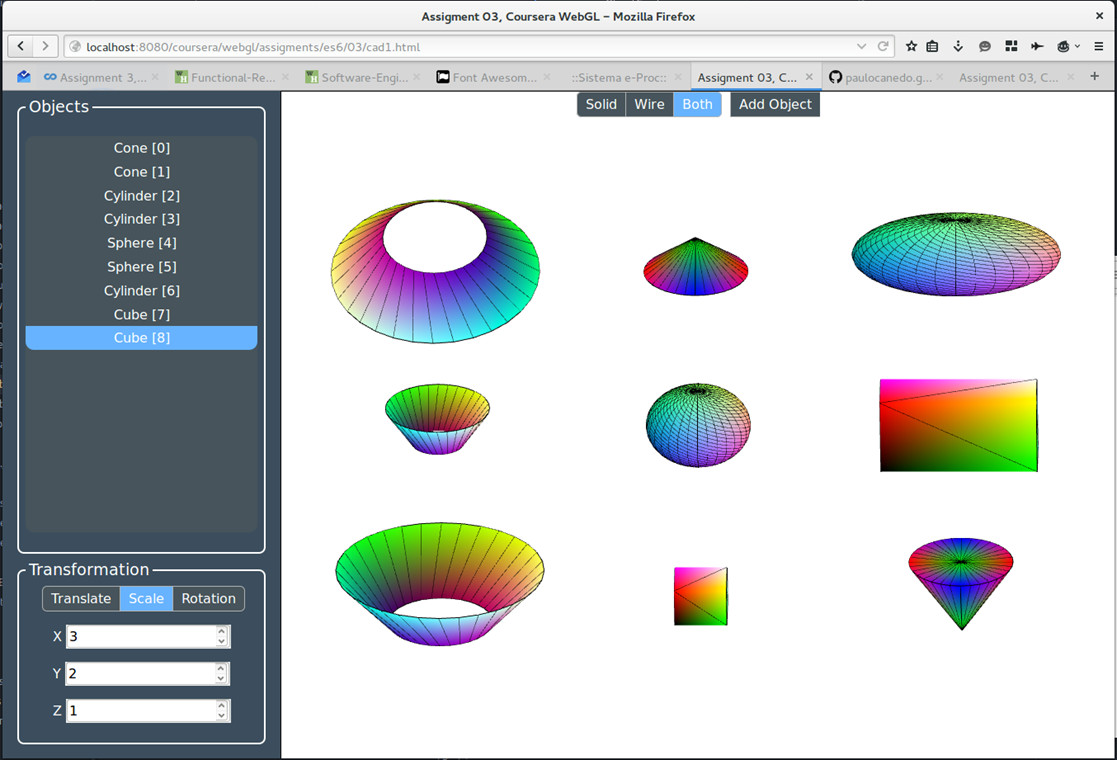

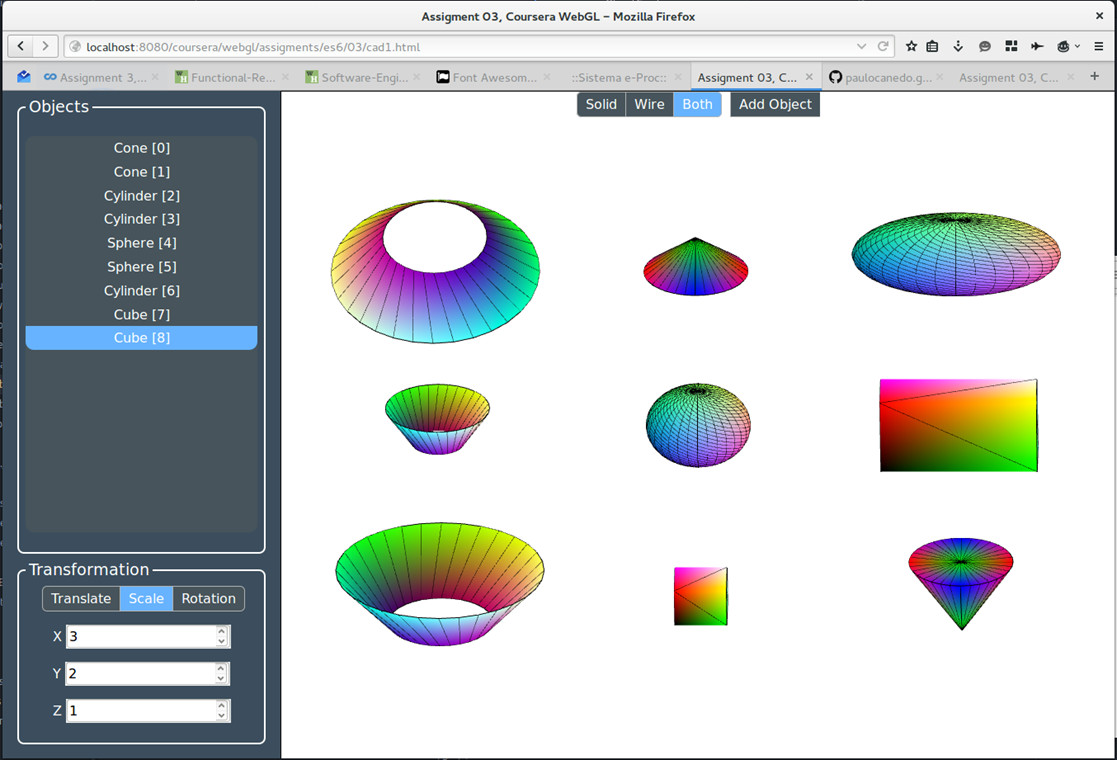

3. A Mini CAD system: Create a scene by adding objects to it. Minimally, the application had to have two object types, and the instance transform was to be determined interactively. There was code available for spheres and cubes, but students were encouraged to add cylinders and/or cones. Because we had yet to cover lighting, most students built applications that rendered each 3D object twice, once filled and once with lines.

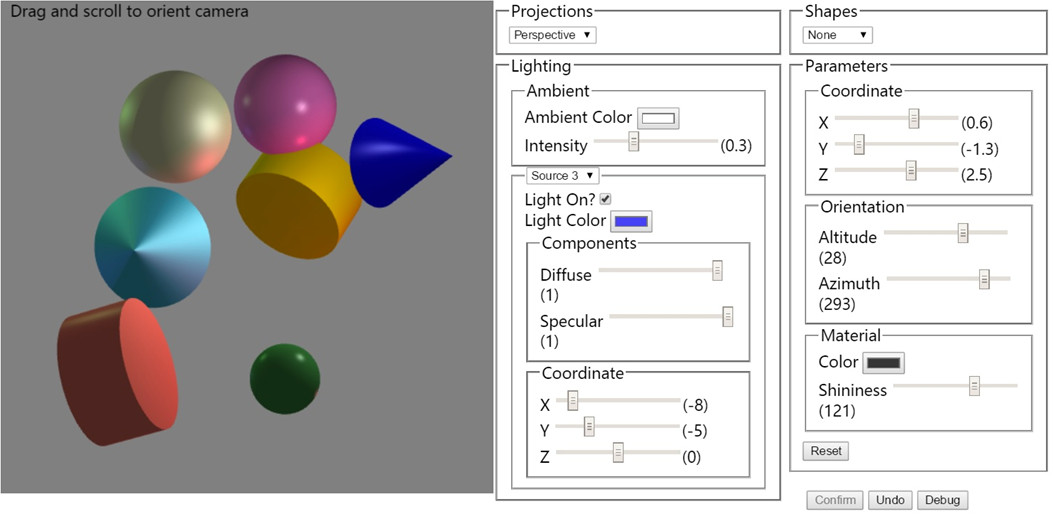

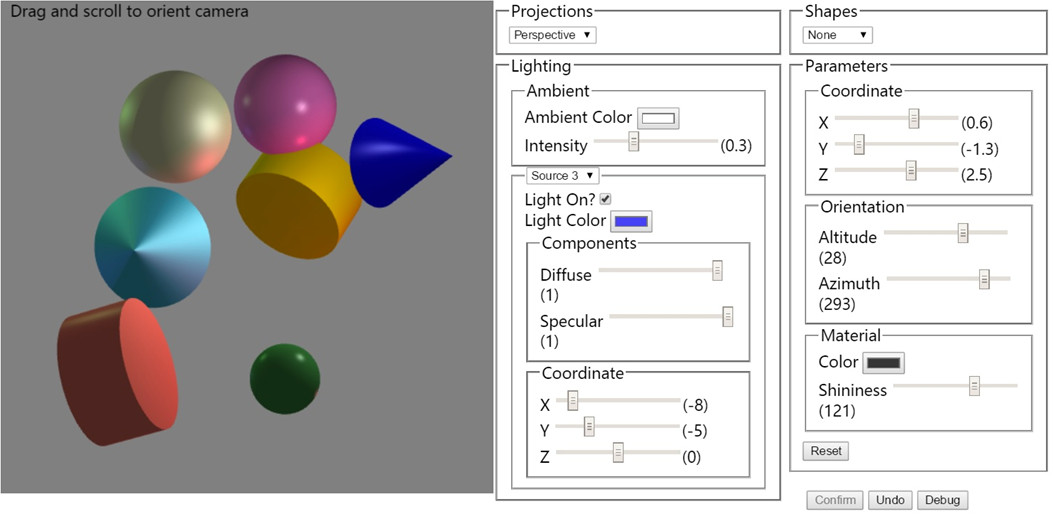

4. Adding Lighting: Students had to write shaders to add lighting to their CAD systems. They were encouraged to compare implementing per-vertex lighting with per-fragment lighting.

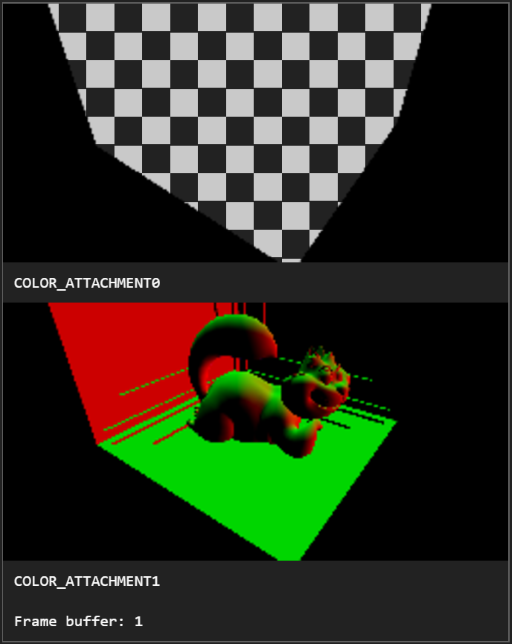

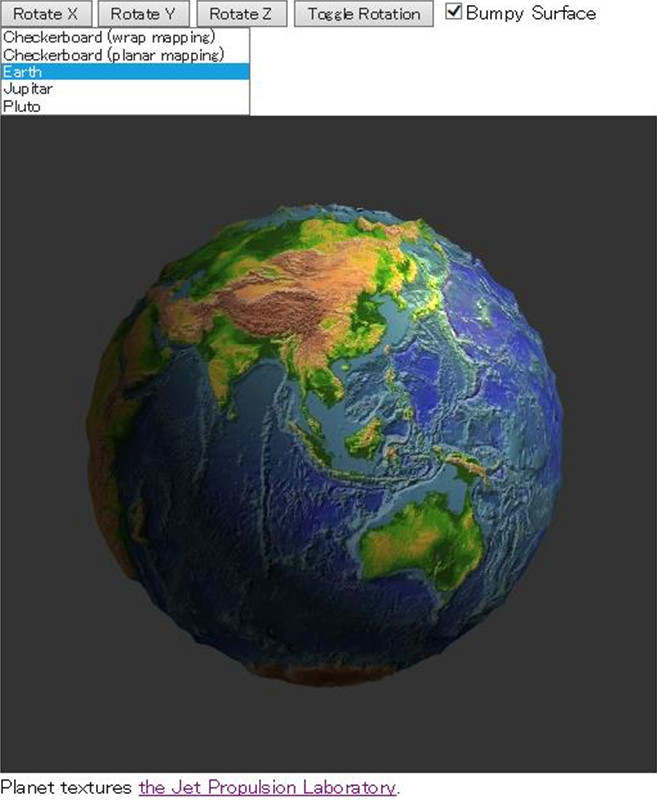

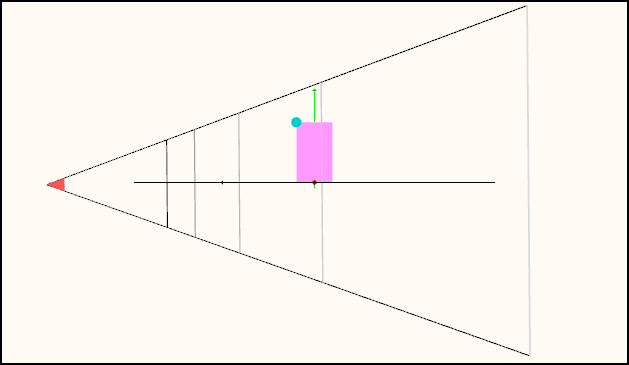

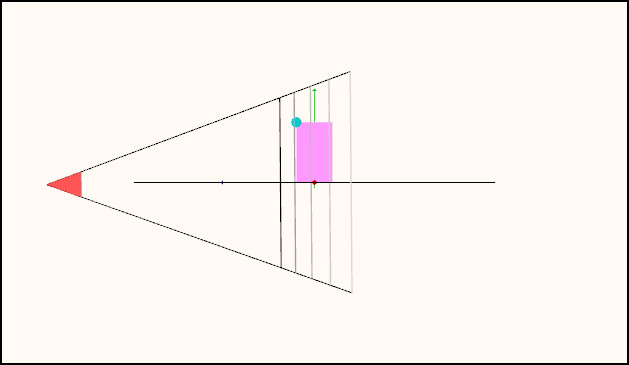

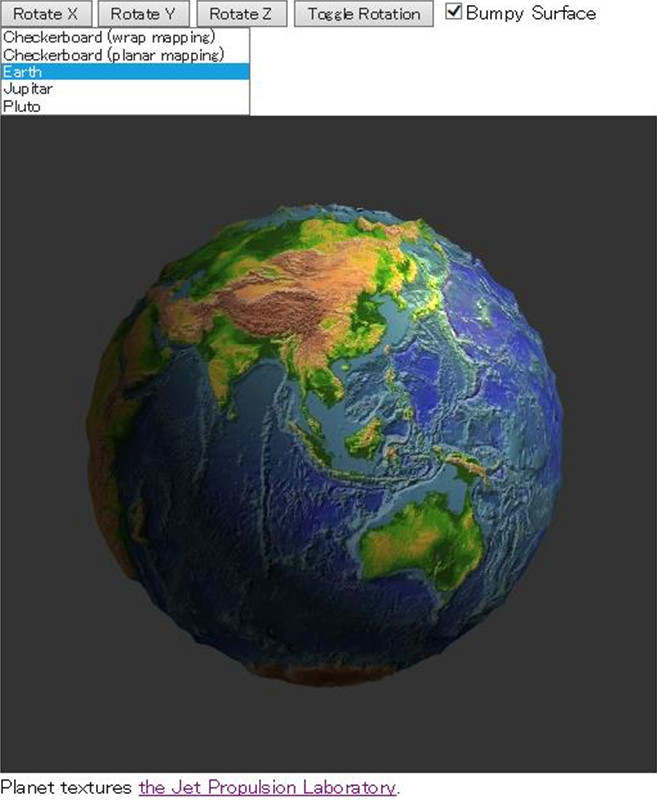

5. Adding Texture Mapping: Applications had to add textures to a sphere. They were asked to use both an image and a generated checkerboard pattern as textures and to use two different methods of assigning texture coordinates.
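As an illustration of the generated checkerboard texture mentioned in Assignment 5, here is a minimal sketch of one way to build the pattern as an array of texels. It is not taken from the course materials; the function name `makeCheckerboard`, its parameters, and the 64×64 size with 8 squares per side are assumptions chosen for the example.

```typescript
// Build a size x size RGBA checkerboard as a flat byte array, four bytes
// per texel. `size` and `squares` (squares per side) are hypothetical
// parameters for this sketch.
function makeCheckerboard(size: number, squares: number): Uint8Array {
  const texels = new Uint8Array(4 * size * size);
  const cell = size / squares; // width of one square, in texels
  for (let row = 0; row < size; row++) {
    for (let col = 0; col < size; col++) {
      // Squares alternate whenever the row or the column crosses a cell boundary.
      const isWhite = (Math.floor(row / cell) + Math.floor(col / cell)) % 2 === 0;
      const value = isWhite ? 255 : 0;
      const i = 4 * (row * size + col);
      texels[i] = value;     // red
      texels[i + 1] = value; // green
      texels[i + 2] = value; // blue
      texels[i + 3] = 255;   // alpha (opaque)
    }
  }
  return texels;
}

const image = makeCheckerboard(64, 8);
```

In a WebGL application, an array like this could be uploaded with `gl.texImage2D(gl.TEXTURE_2D, 0, gl.RGBA, 64, 64, 0, gl.RGBA, gl.UNSIGNED_BYTE, image)`, which is the same call that accepts an image element in its shorter form.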

Assignment 3 proved to be more difficult than I anticipated and if I did it again I’d probably eliminate or simplify Assignment 2 and simplify Assignment 3. Students who went through the whole course loved the last couple of assignments and the freedom they had to experiment. They even created web pages to share their results. See screen shots here.

The Numbers

Initially about 14,500 signed up for the course. However, only 5,500 ever watched even the first video. I still can’t figure out why 9,000 would sign up and then never even take a look. After the first week, I had about 2,500 remaining. Fair enough, since the first week’s videos enabled them to see if the content was what they wanted and if they had the time and background to continue.

Of the remaining 2,500, about 1,000 went through all the videos. Many of them did at least some of the projects, or even all of them, but didn’t care about getting a certificate. In the end, 282 participants earned certificates, including, I believe, all the ones who paid for a verified certificate.

I don’t know the best way to evaluate these numbers. Certainly using 282 out of 14,500 makes little sense. Personally I prefer 1,000 out of 2,500. The 2,500 represents people who really were interested, and the 1,000 went all the way through in one way or another.

Working with Coursera

My institution, the University of New Mexico, was one of the first public institutions to partner with Coursera. Having followed Eric’s course and his blog about doing a course with Udacity, I was curious about the differences. And there are many. Perhaps the most significant is that Coursera leaves virtually all the course development and support to the partner institution. Since UNM, like most public institutions, is under considerable financial stress, the course was pretty much a do-it-yourself (unpaid) venture. With the exception of 2-3 minute videos we recorded on campus to introduce each week’s lessons, I recorded all the videos on my iMac with Camtasia. These were later minimally edited by UNM’s Extended Learning staff. As weird as it may seem, one can actually get pretty good at giving an animated presentation while talking to a computer. I had a similar experience to Eric in finding that making changes to a video is extremely difficult. Since each video is fairly short, I learned to just rerecord a video instead of trying to cut and paste within an existing one.

The major problem I had was dealing with Coursera’s software. Some crucial parts, such as keeping the courses available 24/7 and managing the discussion forums, worked really well. However, there were many other problems that ate large amounts of time, both mine and the students’. These included missing or poor documentation, unannounced changes to the website, rigidity of the software, and unresponsiveness to problems. It was interesting that many of the students were aware of these issues from previous courses but were still taking many MOOCs.

MOOCs and Professional Development

If I compare my course to my (or any) regular academic CS course, it’s not even close in academic content. How can it be otherwise when there’s no book allowed, there’s a lower level entry requirement, and not enough time to assign the amount of work we would expect in an academic course?

As a professional development course, it’s more interesting. I’ve taught well over 100 professional development courses, both in person and online, to audiences ranging from the twenties to the hundreds. The majority were in a concentrated four-day format. I realized after I had finished the MOOC that the hours of video in the MOOC were very close to the amount of lecturing I would do in an intensive four-day course. But I also realized that the MOOC is a superior method for professional development. Besides the fact that it is essentially free, the material is spread over a longer period, allowing participants flexibility in when they learn and giving them time to do serious programming exercises. Looking at the analytics available from my course, it’s clear that the vast majority of the learners have figured this out and are there for professional development.

Why are State Universities and Colleges doing MOOCs?

My experience, reinforced by talking to participants and other MOOC instructors, led me to question why UNM or any state institution is involved with MOOCs. While I can understand the desire to try new educational methods and the idealism that many of us believed would enable us to provide first class technical education to the developing world, two things should have been pretty obvious from the beginning. First, the business model under which we have done our MOOC courses makes no sense; there had to be a lot of self-delusion to believe that verified certificates would bring in enough money to cover our expenses. Out of 14,500 “learners” who initially signed up for my course, all of 200 signed up for verified certificates, generating $10,000 in revenue, revenue that is shared between Coursera and UNM. That’s not going to pay even minimal costs.

What’s more troublesome is that MOOC courses are not academic courses. They’re not even close. So why, when public institutions are facing all kinds of financial problems to support their own students, are they putting resources into professional development courses for people outside of their own regions? Some institutions have recognized this problem. I note that many of the offerings by Coursera are now coming from self-supporting Continuing Education/Professional Development units of Universities and not from the academic units.

MOOC Computer Programming Courses

There’s a level of delusion that I’ve seen with almost all MOOC programming courses (Coursera, Udacity, Code.org, Khan, Codecademy). These courses claim to teach a programming skill in a few weeks with the learner spending only a few hours a week. What happens in these courses is that the learner never writes a complete program but rather changes a line or two of code or adds a few lines to an existing program. That is easy to check and grade by computer, but in the end the student cannot write a complete program using her new skill and is deluded into believing she can. After all, she has a certificate of completion, often for many such courses. This is becoming a serious and more widely recognized problem in the real world, which is getting filled with “programmers” who can’t program but have been told they can based on their experience with online courses.

When I decided to do my MOOC, I was adamant that it would require participants to design complete programs from a set of specifications. In spite of the clear prerequisites for the course, a majority of the participants could not even get started on the simplest of my assignments, one that could have been done by changing four or five lines of code in an example I gave them. Most of them couldn’t even take the problem statement and figure out that this was all they had to do. On the other hand, the participants who came in with real programming experience absolutely loved the course and did some remarkable work. Through the discussion forums I was able to establish relationships with a number of these students and these interactions were as rewarding as any in my 40+ years of teaching computer courses.

How I Would Do It Again If I Were To Do It Again

There are a lot of ifs here, but it’s conceivable that I might, with adequate support this time, do it again. It would involve almost as much work as the first time, since I’d rerecord the videos, but what I have in mind might be a step towards a more stable MOOC that could break down some of the barriers between academia and professional development. I see the MOOC as remaining at 10 weeks with much the same outline. I’d start it at the same time as an academic semester. Students who want academic credit would also register for my regular online computer graphics class. All students would use the MOOC videos for the first 10 weeks, but those registered for the University course would have additional reading and variants on the MOOC programming assignments. I would also meet with these students either live or via video conferencing, thus making the course more of a flipped classroom. After the 10-week MOOC was over, I would continue working with the university students on projects and advanced topics for the rest of the 15-week semester.

In addition, if the University could figure out how to do this and what to charge, I’d open the academic course to students outside the university who could take the course as non-degree students at a reduced tuition. Such credit would be transferable to other academic programs. Exploring such a format might move us in a direction that helps state institutions with their financial issues, leads to working business models for MOOC providers, and at the same time fulfills many of the idealistic goals that many of us have for MOOCs.